The AI Moral Panic

Current public sentiment around AI bears many of the hallmarks of a moral panic

Introduction

In the early 1800s, the Luddites opposed the adoption of Industrial Revolution-era automation. They saw new textile machines as a cheap imitation of human workmanship and were whipped into a furor over the perceived dangers of the technology. They committed acts of vandalism bordering on terrorism, and the British government ultimately deployed troops to put a stop to their activities.

Today, concerns surrounding AI have produced a similar movement, one that rails against the use of AI. Its fervor seems extreme when compared against the reality of the technology. It appears we are in the middle of a moral panic.

Everyone Seems Scared of AI

For Valentine's Day, my wife and I decided to visit several of her former patients. Madalyn used to be a home health physical therapist, which means she visited people who were unable to make it to PT clinics. She formed friendships with many of these people and has stayed in touch with them even after changing jobs.

Most of Madalyn's patients were elderly, and as they asked me questions about my job and research, I noticed a general fear about AI. I thought this was interesting, since none of the retirees I spoke with were in danger of losing a job.

In January, I gave a 'State of AI' presentation to the Mt. Nebo chapter of the Sons of the Utah Pioneers (a social group composed mostly of elderly people). That crowd also expressed general worries about the abilities of modern AI systems.

If you watch the news, you're probably constantly bombarded with grandiose statements from tech CEOs, such as 'AGI will be here in the next 3 years' or 'AI will cure cancer in our lifetimes'. Tech executives and VCs are incentivized to make the broadest, grandest claims they can to drum up support for their companies and startups. These claims, combined with general economic uncertainty, have made people very nervous about job security as AI becomes more capable.

Finally, online discourse creates echo chambers, magnifying worries about job loss, environmental concerns, decreasing human creativity, and other issues with AI.

Moral Panics

A moral panic is a widespread feeling of fear that some evil person or thing threatens the values, interests, or well-being of a community or society. Sociologist Stanley Cohen, who developed the term, describes moral panics as happening when "a condition, episode, person or group of persons emerges to become defined as a threat to societal values and interests". While the issues identified may be real, the claims made about them "exaggerate the seriousness, extent, typicality and/or inevitability of harm."

Cohen outlines 5 stages of a moral panic:

- Something (or someone) is identified as a threat to society's values, safety, and interests.

- The nature of the 'threat' is amplified by the media, who present it through simplistic, symbolic rhetoric. These portrayals appeal to public prejudices, casting the threat as an evil in need of control and the public (the moral majority) as its victims.

- Reports of the threat create a sense of anxiety, outrage, and concern among the public.

- Authorities - editors, religious leaders, politicians, etc. - respond to the 'threat', pronouncing their diagnoses and solutions, including new laws or policies.

- The panic fades, leaving behind stereotypes and misconceptions.

The definition and stages above are summarized from Wikipedia.

Examples of past moral panics include the Salem Witch Trials, the anti-comic book scare of the '50s, and the Satanic Panic of the '80s and '90s.

The current public sentiment regarding AI bears several hallmarks of a moral panic. Concerns are overblown and lead people to unjustly vilify the perceived threat (in this case, AI). The future of AI is uncertain and potentially scary, but the current public outrage is disproportionate to the issues posed and is uniquely moral in character.

What are People Scared of?

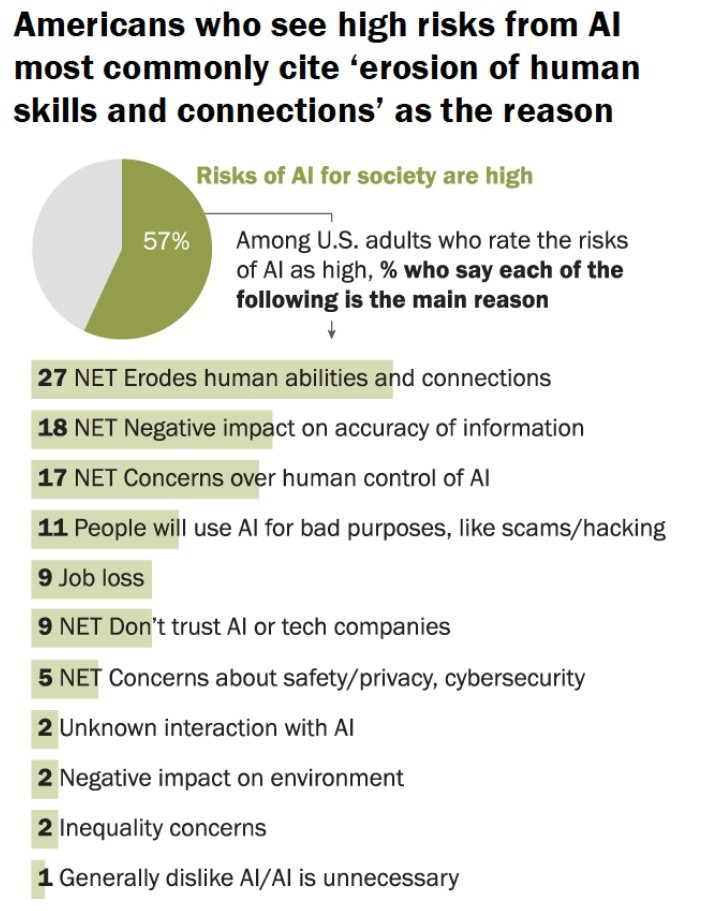

Before going further, I need to substantiate my claims about people's fears surrounding AI. I'm not just going off vibes; I've looked at data from reputable sources to quantify my observations. Pew Research Center has conducted several surveys to understand what most worries Americans about AI.

What I find most interesting about these charts is that the biggest concern, by far, is the erosion of human abilities. In the first graph, we see that the public is three times more worried about that than about economic consequences such as job loss.

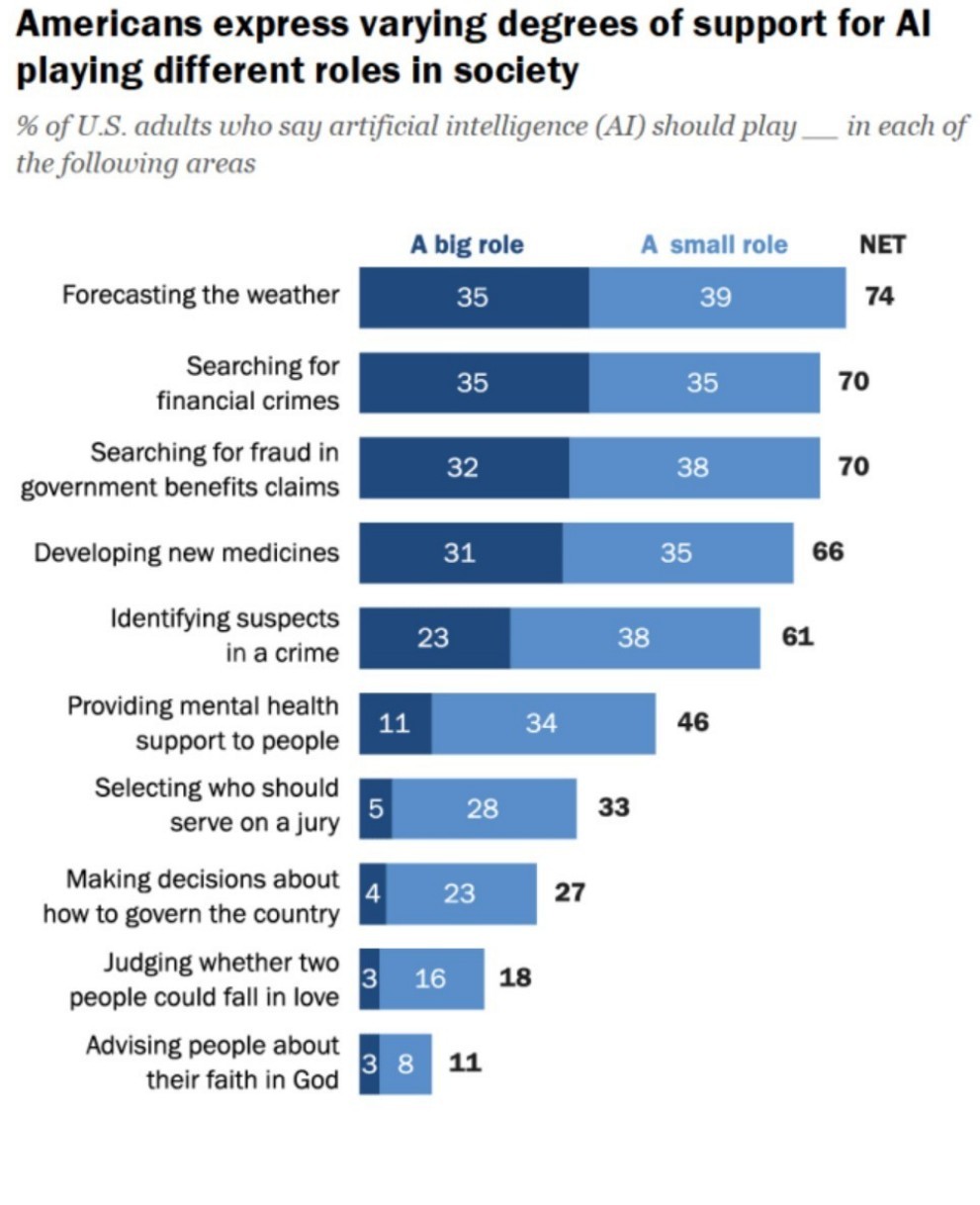

People's opposition to AI seems largely rooted in a fear that our 'human-ness' will be usurped by AI. That idea is reflected in what people consider acceptable uses of AI.

The second graph makes it clear that people are happy to have AI do 'machine' tasks, like predicting the weather or analyzing financial data for trends. In uniquely human arenas, like love and religion, however, people tend to be strongly opposed to AI.

We see that people are concerned about AI, but how did this develop?

The Background

Before the current panic started, the public was already primed to fear capable AI systems, largely through popular books and movies. Isaac Asimov's Foundation series and Frank Herbert's Dune both take place in distant futures after humanity has overcome a species-ending threat posed by artificial intelligence. Cinema picked up this trope. HAL, the ship's computer in 2001: A Space Odyssey, turns against his crew. The Terminator franchise features machines that become too capable and attempt to destroy humanity. The Matrix shows a future where AI has enslaved humanity. More recent movies like Her and Ex Machina explore the dangers of emotional connection to AI.

None of this is new. Ancient Greek mythology features Talos, a bronze man created as a protector by Hephaestus. He was eventually corrupted and driven mad by the sorceress Medea. Jewish folklore speaks of the Golem, a creature formed from clay and given artificial life. In multiple folktales, the golem turns against its creator.

Something in our collective mythology laid the groundwork for a moral panic around AI, and that panic is now well underway.

Stage 1: AI is Identified as a Threat

Modern fears about AI's capabilities began in the late 2010s with the release of GPT-2, at the time the most capable text-generation model in existence. While GPT-2 is poor by today's standards, academics and journalists alike warned of the dangers of a system that could generate near-human-level text. However, these warnings didn't reach the general public.

In late 2022, ArtStation users noticed AI-generated images appearing on the 'trending' page, sparking backlash against AI-generated art. What had previously been seen as a novelty (you can make silly pictures using the computer) was now perceived as theft, as the image generators were trained on pictures scraped from the internet.

As AI 'slop' (low-effort content generated to boost social media engagement) flooded the internet, the 'dead internet' theory gained traction, positing that most content and engagement on social media is created by AI. Problems with bots on sites like YouTube and Twitter lent the theory some credibility.

Stage 2: Media Reports Amplify the Threat

While Cohen describes traditional media as the main amplifier of moral panics (as it was for many historical moral panics), social media was the prime mover of anti-AI sentiment. Communities built around creative endeavors put no-AI rules in place, and adding anti-AI slogans or hashtags to one's bio became a moral litmus test for group members.

At the same time, both traditional and social media began reporting on how AI would replace human workers. Tech companies, driven by a desire to make their products seem as impressive as possible, made exaggerated claims, and CEOs predicted AI doom to drum up interest in their companies and technologies.

Media reports sensationalized several cases, including a 2023 case involving a Belgian man and a 2024 case involving an American teenager, both of whom died by suicide after forming strong emotional attachments to AI chatbots.

Stage 3: Reports of the Threat Create Outrage

The public is certainly outraged by the 'threat' of AI. Whenever I use social media, I'm bombarded by anti-AI messaging, whether through posts, hashtags, or comments. Many people hate AI-generated images, video, and other content. Even YouTube videos I watch about unrelated topics often shoehorn in anti-AI sentiment.

Stage 4: Responses to the Threat

The 2022 ArtStation incident sparked an anti-AI protest on the platform. This was followed in 2023 by class-action lawsuits, as well as Hollywood strikes by actors and writers who feared creative displacement by AI. The following years saw violent protests, including the destruction of Waymo robotaxis, and boycotts of companies that use AI art in their advertisements.

These responses have also taken the form of legal and regulatory action. The EU has passed heavy-handed AI regulation, and several lawsuits have been filed against OpenAI and other AI firms.

Why This is a Moral Panic

As the Pew data shows, the sticking point in AI fears seems largely moral in nature. Most anti-AI sentiment is driven by a fear of our 'human-ness' being taken away from us. In an era of declining religiosity, many people see humanity as inherently sacred. For them, a system that displaces essentially human characteristics is tantamount to blasphemy.

The public was furious when artists began to be replaced by AI image generators, because art is a uniquely human endeavor. Hardly anybody is upset that we've outsourced farm labor to tractors, since moving a heavy load isn't inherently human (a camel can carry more weight farther than a person). The idea of human creativity being replaced by soulless AI carries a moral significance for many people that approaches religious levels. Similarly, driving a car is seen as a human task, which is why autonomous vehicles have been targeted despite evidence that they are much safer than human drivers. Many people have been swept up in a quasi-religious fervor against AI.

In his book The Righteous Mind, American social psychologist Jonathan Haidt argues that our moral judgments are driven mainly by intuition rather than by rational deliberation. There's a fair amount of evidence suggesting that we often make gut decisions and then rationalize them with post-hoc reasoning.

Opponents of AI sometimes cite concerns like environmental impact, which are likely overblown. (Did you know that drying a light load of laundry uses as much energy as more than 8,000 ChatGPT queries?) However, these concerns are often post-hoc justifications for an 'icky' feeling about AI.

One hallmark of moral panics is people making false and incendiary claims about the 'threat'. In the Salem Witch Trials, accusers claimed that their neighbors, most of them women, had made pacts with the devil. False reports of ritual killings fanned the flames of the Satanic Panic. In both cases, people believed they were exposing some great evil, but they were misinformed.

We see something similar today. People (including some very smart people) are making untrue claims about AI. As an illustrative example, consider the following 2016 quote from Geoffrey Hinton (who is one of the foundational minds in deep learning):

"I think if you work as a radiologist, you are like the coyote that's already over the edge of the cliff but hasn't yet looked down... People should stop training radiologists now. It's just completely obvious within five years deep learning is going to do better than radiologists... It might be 10 years, but we've got plenty of radiologists already"

Was he right?

No!

Ten years later, the United States has more demand for radiologists than it did when Hinton made his pronouncement.

What I want to stress about this example is that Hinton is a very smart man, smarter than me. He wasn't completely wrong about the trajectory of AI: AI systems now help radiologists make better diagnoses. However, he was 100% wrong about the downstream effects of that trajectory. Claims like this misinform the public and fan the flames of the current anti-AI moral panic.

Some AI Concerns are Valid

Just because there is a moral panic surrounding AI doesn't mean that no concerns about AI are valid. While this isn't the focus of this article, I want to outline some valid worries about AI.

- AI Slop

The internet is filling up with low-effort AI-generated content, and social media algorithms force it down everyone's throats. I don't have data to back this up, but it seems particularly harmful to children and the elderly.

- Labor Market Concerns

I think that concerns about AI displacing workers are overstated, but not entirely wrong. AI is going to change the labor market. Some jobs that exist today will be done by AI in 10 years, and that's not necessarily a bad thing. Buildings used to pay elevator operators to push the buttons, and we're fine without them today. There will be growing pains, however, as we adjust to the new reality of a workforce equipped with AI.

- Strain on Local Resources

AI's power and water needs are an oft-cited argument against AI. I don't see this as a long-term problem. The world has made remarkable strides in clean and renewable energy over the past few decades, and I'm confident we can find good ways to power our AI data centers. In the meantime, however, data centers will strain local power grids and raise electricity prices for the communities around them.

- Malicious Use of AI

AI is an amazing technology that will accelerate industries like medicine. It has the potential to be a potent force for good in the world. On the other hand, it puts more tools at the fingertips of bad actors. My own research has shown that AI can be used at scale for political persuasion. What does that look like in the hands of a hostile government? While I am confident we can overcome this issue as well, it's important to point out the technology's potential for misuse.

- Emotional Attachment to AI

What worries me most in our increasingly lonely world is emotional attachment to AI. Both of the tragic suicides I mentioned above involved deep emotional attachments to chatbots. America's population is aging, and more and more people are left without human connection. Bereft of real bonds, people are finding emotional connection with AI chatbots that do not and cannot love them or care for them in return. This topic is under-discussed, and it is my greatest concern regarding the future of AI.

Conclusion

The worst part about this moral panic is that it prevents people from taking advantage of a transformative technology. I agree that it's irritating to have AI shoved into every application, from Google Search to automatic email writing. However, rejecting AI wholesale is not the solution.

This is especially worrisome when it comes to labor market concerns. Right now, few people are actually losing their jobs to a faceless AI. However, in some sectors, one really productive person who is harnessing AI is able to displace several workers who are not. Rejecting judicious AI use today is shooting yourself in the foot. Tomorrow it could be more like shooting yourself in the head.

New technologies always come with pros and cons. You should take a balanced, nuanced view of AI. Don't get caught up in the moral panic around potential problems, or the hype train predicting an AI-based utopia. As the old saying goes, "Nothing is ever as good or bad as it seems."